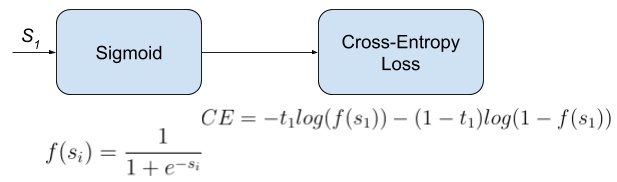

How to Use Real-World-Weight Cross-Entropy Loss in PyTorch

As a data scientist or software engineer, you may be familiar with the concept of cross-entropy loss. It is a popular loss function used in many deep learning models, including image classification, object detection, and natural language processing. However, in real-world scenarios, the distribution of classes may not be balanced, which can lead to biased models. In this article, we will explore how to use real-world-weight cross-entropy loss in PyTorch to overcome this issue.

What is Cross-Entropy Loss?

Cross-entropy loss is a popular loss function used in classification tasks. It measures the dissimilarity between the predicted and actual probability distributions of classes. The formula for cross-entropy loss is given by:

$$L_{CE} = -\sum_{i} y_i \log(\hat{y}_i)$$

where $y$ is the true distribution and $\hat{y}$ (y_hat) is the predicted distribution.

In real-world scenarios, the distribution of classes may not be balanced. For example, in a medical diagnosis model, the number of healthy patients may be much higher than the number of diseased patients. In such cases, the model may become biased towards the majority class and perform poorly on the minority class. To overcome this issue, we can use real-world-weight cross-entropy loss.

What is Real-World-Weight Cross-Entropy Loss?

Real-world-weight cross-entropy loss is a modified version of cross-entropy loss that takes into account the class imbalance in the dataset. In this loss function, each class is assigned a weight that is inversely proportional to its frequency in the dataset, so mistakes on rare classes cost more than mistakes on common ones. The formula for real-world-weight cross-entropy loss is given by:

$$L_{RWW} = -\sum_{i} w_i \, y_i \log(\hat{y}_i)$$

where $w_i$ is the weight assigned to class $i$, $y_i$ is the true distribution of class $i$, and $\hat{y}_i$ (y_hat_i) is the predicted distribution of class $i$.

How to Implement Real-World-Weight Cross-Entropy Loss in PyTorch

To implement real-world-weight cross-entropy loss in PyTorch, we need to define a custom loss function that takes into account the class weights.

```python
import torch
import torch.nn.functional as F


class RealWorldWeightCrossEntropyLoss(torch.nn.Module):
    def __init__(self, class_weights):
        super(RealWorldWeightCrossEntropyLoss, self).__init__()
        # One weight per class, e.g. [0.5, 5.0] for a two-class problem
        self.class_weights = class_weights

    def forward(self, inputs, targets):
        # inputs: raw logits of shape (batch, num_classes)
        # targets: integer class indices of shape (batch,)
        log_probs = F.log_softmax(inputs, dim=1)
        weights = torch.as_tensor(
            self.class_weights, dtype=inputs.dtype, device=inputs.device
        )
        # Log-probability the model assigns to each sample's true class
        picked = log_probs[torch.arange(len(targets), device=inputs.device), targets]
        # Scale each sample's loss by the weight of its true class
        loss = -weights[targets] * picked
        loss = torch.mean(loss)
        return loss
```

In the above code, we define a custom loss function called RealWorldWeightCrossEntropyLoss. The constructor takes a list of class weights as input. The forward method takes the model's predicted outputs (raw logits) and the true targets (class indices) as input. It applies log-softmax to the outputs, selects the log-probability of each sample's true class, multiplies it by that class's weight, and returns the mean over the batch.
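To make the idea of weights that are "inversely proportional to class frequency" concrete, here is a small dependency-free sketch. The helper names `inverse_frequency_weights` and `weighted_cross_entropy` are hypothetical, and the normalization `n_samples / (n_classes * count)` is only one common choice, assumed here for illustration; the article does not prescribe a specific formula for the weights.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels):
    """Return a dict mapping each class to a weight proportional to 1 / frequency.

    Assumed normalization: n_samples / (n_classes * count), so a perfectly
    balanced dataset would give every class a weight of 1.0.
    """
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {c: n_samples / (n_classes * counts[c]) for c in sorted(counts)}


def weighted_cross_entropy(probs, target, weights):
    """Weighted cross-entropy for one sample with a one-hot target.

    With a one-hot y, -sum_i w_i * y_i * log(y_hat_i) reduces to
    -w_target * log(y_hat_target).
    """
    return -weights[target] * math.log(probs[target])


# 90 healthy patients (class 0) and 10 diseased patients (class 1)
labels = [0] * 90 + [1] * 10
weights = inverse_frequency_weights(labels)

# The same uncertain prediction costs more when the true class is the rare one
loss_majority = weighted_cross_entropy([0.5, 0.5], 0, weights)
loss_minority = weighted_cross_entropy([0.5, 0.5], 1, weights)
```

With this 90/10 split the minority class receives a weight of 5.0 and the majority class roughly 0.56, so an equally uncertain prediction is penalized about nine times more on a diseased patient than on a healthy one.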

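PyTorch's built-in cross-entropy also accepts per-class weights through its `weight` argument, which may be a convenient alternative to a hand-written loss. The sketch below, using made-up logits and weights chosen only for illustration, checks that the built-in per-sample losses match the manual expression "negative class weight times log-probability of the true class". It also shows one detail worth knowing: with `reduction="mean"` the built-in divides by the sum of the target weights rather than by the batch size, so it can differ from a plain mean of the weighted losses.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

# Illustrative values only: 4 samples, 3 classes
logits = torch.randn(4, 3)
targets = torch.tensor([0, 2, 1, 2])
class_weights = torch.tensor([0.5, 2.0, 1.0])

# Built-in weighted cross-entropy, one loss value per sample
builtin = F.cross_entropy(logits, targets, weight=class_weights, reduction="none")

# Manual version: -w[target] * log_softmax(logits)[target]
log_probs = F.log_softmax(logits, dim=1)
picked = log_probs[torch.arange(len(targets)), targets]
manual = -class_weights[targets] * picked

# Default reduction="mean" normalizes by the sum of the target weights
builtin_mean = F.cross_entropy(logits, targets, weight=class_weights)
manual_weighted_mean = manual.sum() / class_weights[targets].sum()

# A plain mean over the batch, as a custom loss might compute it
plain_mean = manual.mean()
```

Which normalization is preferable depends on the task: the weighted mean keeps the loss scale comparable across batches with different class mixes, while the plain mean lets batches dominated by heavily weighted classes contribute larger gradients.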